No ROCKET Necessary: 3rd Party GPU Acceleration is Coming to REDCINE-X PRO

While proprietary used to be the name of the game, we are entering an age where camera systems and post solutions are choosing more open options for maximum compatibility (and likely a wider install base as a result). Look no further than the increasing use of CinemaDNG in cameras like the Ikonoskop, Blackmagic Cinema Camera, and Digital Bolex. Now it looks like RED is at least partially entering that arena with an update to REDCINE-X PRO.

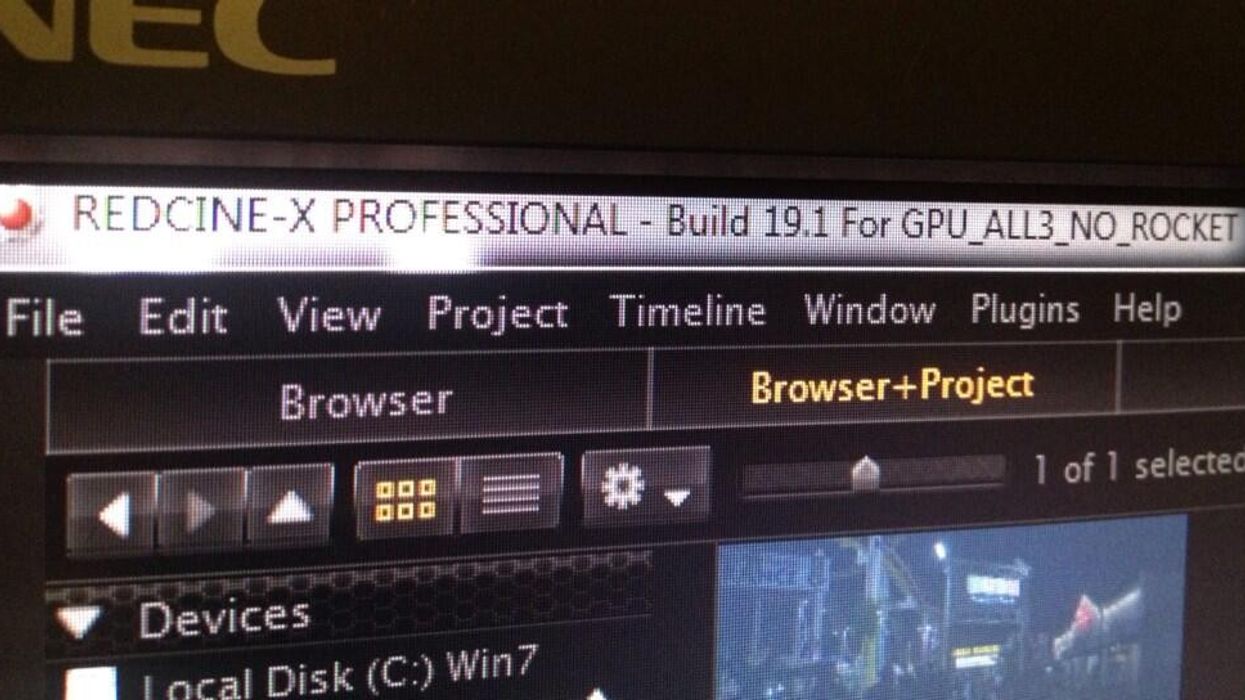

Up until now, a RED ROCKET was all but necessary for real-time performance as well as faster proxies in REDCINE-X PRO. That is fine for big-budget productions that are going to post houses, but for smaller indie productions, a ROCKET card is about twice as expensive as a fast computer system alone. Jarred Land, who is now the public face of RED according to founder Jim Jannard, recently posted to REDUser this tweet from Bill Bennett, ASC, which shows a new build of REDCINE-X PRO:

Jarred said this in the forum, and he also mentioned that having more than one GPU will increase performance:

Single Titan 6K @ 24fps. Rocket will be still be alot faster.. but it becomes more of a luxury rather than a necessity.

This is gigantic news for anyone who has dealt with RED workflows. Yes, you don't absolutely need a ROCKET card, but going without one makes for much longer transcoding times, and you'll have to settle for lower-quality debayering during playback. It seems like the ROCKET will still be useful, but if you've got a decent GPU, it likely won't be worth the money to spring for the proprietary RED card once this new REDCINE-X comes out.

They were originally going to announce this at IBC, but obviously the cat has been let out of the bag early. I imagine that we will get our first DRAGON .R3Ds right around IBC if that's when the new version of RCX PRO is set to come out. It also coincides with the first DRAGON sensor upgrades for users, so it only makes sense that the software has to be public by then.

Bill also mentioned some other interesting stuff on Twitter:

Since the sensor is so clean, with such a high signal-to-noise ratio, pushing it to higher ISOs doesn't add much of a noise penalty. To my knowledge, RED isn't applying any gain at the sensor with DRAGON when you change ISO, so that would mean the sensor is just that clean. This seems similar to shooting RAW photos with DSLRs, where the cameras are actually native at a lower ISO but can be pushed a few stops higher without much of a noise or dynamic range penalty.

The good thing about DRAGON being able to shoot at lower ISOs with the same dynamic range is that you can use a stop or two less neutral density outdoors. Also, if the dynamic range is really that high, it should be possible to bring it down to 100 ISO or lower and still keep dynamic range somewhere around that of the MX sensor.
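For anyone who wants to put numbers on the "stop or two less ND" idea, here is a minimal sketch of the arithmetic. The ISO values are hypothetical examples, not figures from RED, and the function names are made up for illustration. It only relies on two standard facts: each stop is a doubling or halving of sensitivity, and each stop of ND corresponds to roughly 0.3 of optical density.

```python
import math

def stops_between(iso_from: float, iso_to: float) -> float:
    """Exposure change in stops when re-rating from iso_from to iso_to.

    Negative values mean the camera is less sensitive at iso_to,
    so that many fewer stops of ND are needed for the same exposure.
    """
    return math.log2(iso_to / iso_from)

def nd_density_for_stops(stops: float) -> float:
    """Optical density of an ND filter that cuts the given number of stops."""
    return stops * math.log10(2)  # about 0.3 density per stop

# Hypothetical example: rating the camera at ISO 200 instead of ISO 800.
saved_stops = -stops_between(800, 200)
print(f"ND saved: {saved_stops:.1f} stops, "
      f"density {nd_density_for_stops(saved_stops):.2f}")
```

In this made-up case, dropping two stops of ISO means you could leave roughly an ND 0.6 off the lens in the same light.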

Either way, we should find out soon enough when we'll get the newest version of RCX and hopefully some DRAGON .R3Ds to play around with.
