3 Benefits of 8K That Have Nothing to Do with Image Dimensions

Maybe large format matters, after all.

Whether or not 8K matters has been a hot topic since the format emerged. During Camerimage 2017 in Poland, Michael Cioni (SVP of Innovation at Panavision & Light Iron), Dan Sasaki (VP of Optical Engineering, Panavision), and Ian Vertovec (Senior DI Colorist, Light Iron) discussed the benefits of shooting large-format (greater than 4K) video.

In their argument, they lay out three components of image quality using a triangle model in which resolution is only one-third of the picture. They claim that, while resolution is certainly important, pixel density also provides greater perspective and magnification, all of which give filmmakers more control and flexibility over the look of the final image. You can watch the entire hour-long conversation here or read our three key takeaways below.

1. Resolution is not sharpness

Dan Sasaki asserts that, while we often use these terms interchangeably, they are not the same thing. He attributes the confusion to camera manufacturers' marketing and to what he calls "poor education". Contrary to the way many of us talk about images, Sasaki argues that higher resolution images actually look smoother or softer, not sharper, than lower resolution images, in part because more pixels are available to render curves smoothly. He goes on to demonstrate in the slide shown above that, when viewed at a distance, people will mistake an image shot with a lower resolution camera for the sharper one because of this.

So if higher resolution doesn't look sharper, what does it do? Sasaki argues that because higher resolution sensors resolve curved lines more smoothly, they lend the recorded image a dimensionality that is greater and more lifelike than what less resolved sensors capture. This helps convince our brains that the images on screen closely represent the subjects they portray. Furthermore, he argues that because these qualities are "baked in" to the image, the improvement remains proportionately observable (compared to the same image shot with a less resolved sensor) whether the footage is displayed at native resolution or down-sampled to 4K, 2K, or HD.
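To make the "baked in" idea concrete, here is a minimal sketch of our own (not something shown in the panel). It rasterizes the same disk twice: once directly on a coarse grid, and once on a grid four times finer that is then averaged down to the coarse size. The function names, the grid sizes, and the simple box-average downsample are all assumptions chosen for illustration; a real camera pipeline also involves optics, demosaicing, and more sophisticated scaling filters.

```python
def render_disk(size, radius_frac=0.4):
    """Rasterize a filled disk on a size x size grid (1.0 inside, 0.0 outside).

    Each pixel is sampled once at its center, so the edge is a hard staircase.
    """
    center = size / 2.0
    radius = radius_frac * size
    return [
        [
            1.0 if (x + 0.5 - center) ** 2 + (y + 0.5 - center) ** 2 <= radius ** 2 else 0.0
            for x in range(size)
        ]
        for y in range(size)
    ]


def box_downsample(img, factor):
    """Average each factor x factor block of pixels into one output pixel."""
    n = len(img) // factor
    out = []
    for by in range(n):
        row = []
        for bx in range(n):
            total = sum(
                img[by * factor + dy][bx * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            )
            row.append(total / (factor * factor))
        out.append(row)
    return out


def distinct_levels(img):
    """Count how many different brightness values appear in the image."""
    return len({round(v, 6) for row in img for v in row})


# Hypothetical sizes: a 16 x 16 "low resolution" capture versus a 64 x 64
# capture that is downsampled to the same 16 x 16 delivery size.
low_res = render_disk(16)
high_res_downsampled = box_downsample(render_disk(64), 4)

print("levels, captured at 16x16:", distinct_levels(low_res))
print("levels, captured at 64x64 then downsampled:", distinct_levels(high_res_downsampled))
```

The directly captured image contains only pure black and pure white, so its curve is a staircase. The downsampled image has the same pixel count but carries intermediate gray values along the edge, each one encoding how much of that pixel the disk actually covered. That is one plausible reading of why a higher resolution capture can look smoother, rather than sharper, even after it is scaled down.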

2. Humans can see more than 4K

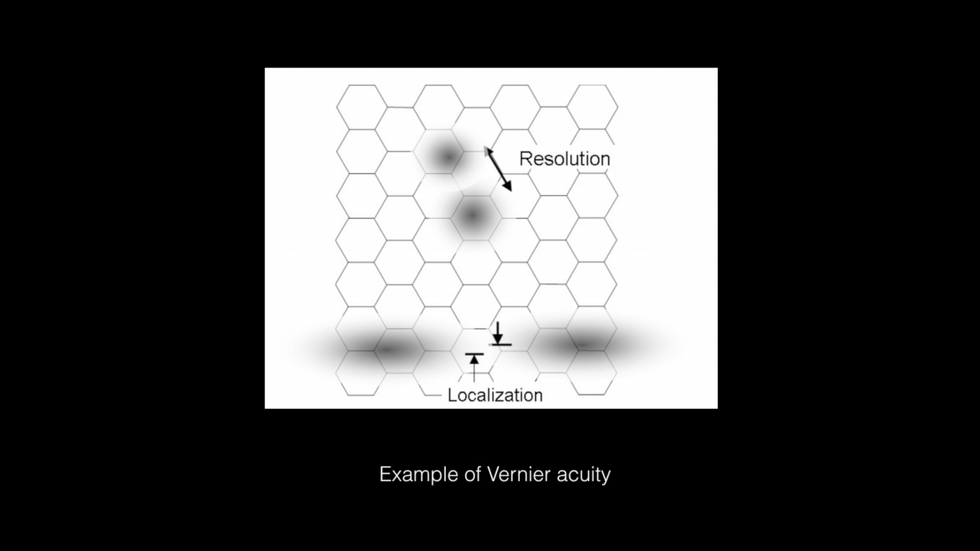

While it's been argued that the human eye cannot resolve detail smaller than one arc minute, Dan Sasaki argues that our brains give us hyperacuity: the ability to extrapolate visual information from extremely small cues (smaller than the diameter of a single rod or cone in our eyes). He suggests that we have three types of hyperacuity: Curvature Detection, Sharpness, and Stereo Acuity. We use them to detect things like curvature and the sharpness and smoothness of edges, which greatly helps us see and interpret images (facial shape, for example). By packing all of this sub-pixel information into the image, he argues, we give the viewer much more information from which to render the image in their brain, and this provides a sense of greater depth and more realism.
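For a sense of scale, the one-arc-minute figure can be turned into a quick calculation. The sketch below is ours, not the panel's: it estimates the angle a single pixel subtends at the viewer's eye. The screen width (1.43 m, roughly a 65-inch 16:9 display) and the 2.5 m viewing distance are hypothetical values picked only for illustration, and the function name is our own.

```python
import math


def pixel_arcminutes(screen_width_m, horizontal_pixels, viewing_distance_m):
    """Angle subtended by one pixel at the viewer's eye, in arc minutes."""
    pixel_pitch_m = screen_width_m / horizontal_pixels
    angle_rad = 2 * math.atan(pixel_pitch_m / (2 * viewing_distance_m))
    return math.degrees(angle_rad) * 60  # 60 arc minutes per degree


# Hypothetical viewing setup (assumed values, not from the talk).
SCREEN_WIDTH_M = 1.43
VIEWING_DISTANCE_M = 2.5

uhd_4k = pixel_arcminutes(SCREEN_WIDTH_M, 3840, VIEWING_DISTANCE_M)
uhd_8k = pixel_arcminutes(SCREEN_WIDTH_M, 7680, VIEWING_DISTANCE_M)

print(f"4K pixel: {uhd_4k:.2f} arc minutes")
print(f"8K pixel: {uhd_8k:.2f} arc minutes")
```

Under these assumptions a 4K pixel already subtends about half an arc minute, which is below the one-arc-minute threshold. That is the basis of the usual claim that anything beyond 4K is wasted. Sasaki's hyperacuity argument is that the brain still makes use of detail below that threshold, so the threshold alone does not settle the question.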

More Pixels Provide More Color Information. Credit: DXL

3. More pixels render better colors

In the same way that higher pixel density lets curved lines look smoother and lets images exhibit more depth, more pixels also help our eyes discern subtle shifts in color and gradient. This translates into more lifelike and realistic color.

As shown in the image above, the hue changes accentuate the fact that the leaf has dimensionality front-to-back and top-to-bottom. As Sasaki argues throughout the panel discussion, it is not necessary to view high-resolution images on high-resolution screens in order for our eyes and brains to be able to benefit from the increased information captured by the higher resolution sensor.

Source: DXL Channel