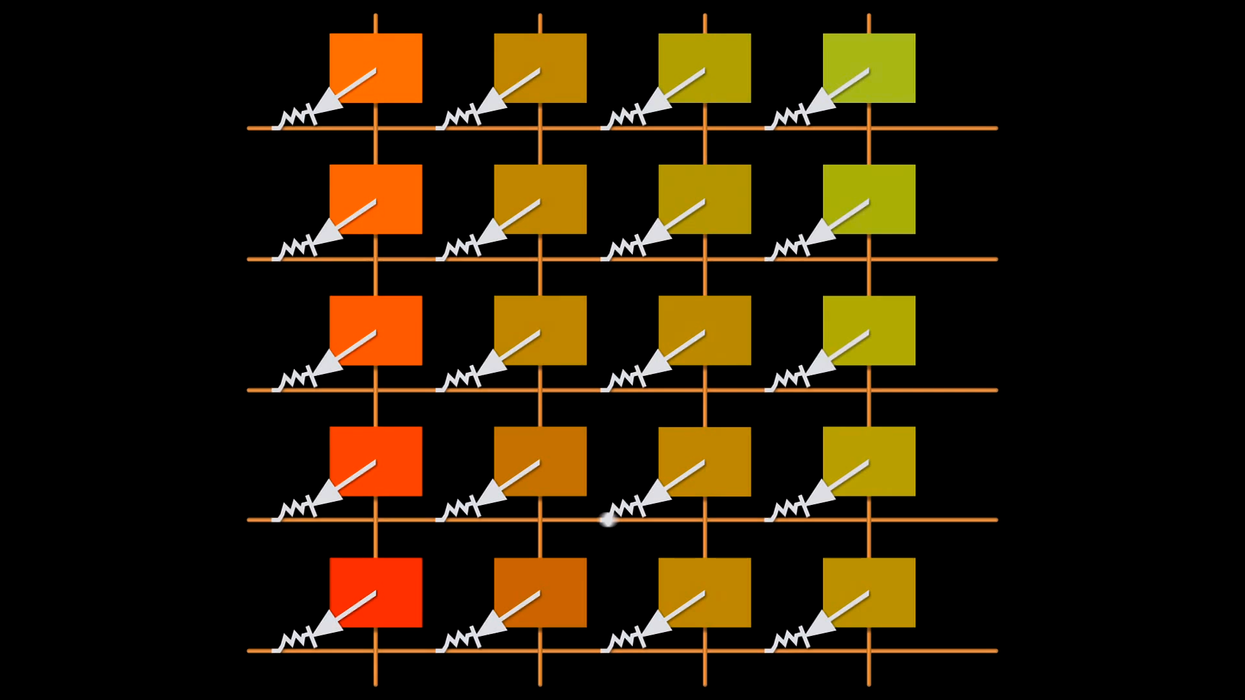

Do you know how your sensor translates light into the data that later becomes your images? How does the physical construction of your sensor affect how pixels get interpreted? This little video is a great introduction to how CCD (charge-coupled device) sensors work in a digital camera, and it gives a peek at the cool stuff happening under our noses at 24fps:

These days CMOS sensors are as popular as CCD sensors (Canon DSLRs, RED's cameras, and Sony cameras like the FS100 and FS700 use CMOS sensors), but there are plenty of interesting cameras coming out with CCD sensors (the upcoming Digital Bolex will have one, as will the in-development Kineraw cameras). As filmmakers demand more access to the raw data flowing from sensors, it may become more and more important to understand just how that data is created and how it's being processed (or not), depending on the sensor technology and color filter array used. Even with my limited exposure to the "under-the-hood" world of image processing, this video linked neatly with what I'd read about Bayer filters and how color channel information is determined.
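For the curious, here is a rough Python sketch of the Bayer idea: each photosite records only one color, and the missing channels are rebuilt afterwards. It assumes an RGGB layout and a very crude half-resolution reconstruction, and the function names are made up for illustration. Real cameras and raw converters use far more sophisticated interpolation.

```python
def bayer_channel(row, col):
    """Return which color an (assumed) RGGB Bayer filter passes at (row, col)."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(rgb):
    """Simulate the sensor: keep a single color sample per photosite."""
    index = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[index[bayer_channel(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb)
    ]


def demosaic_half(raw):
    """Collapse each 2x2 RGGB block into one RGB pixel (half resolution)."""
    out = []
    for r in range(0, len(raw) - 1, 2):
        out_row = []
        for c in range(0, len(raw[r]) - 1, 2):
            red = raw[r][c]
            # Two green photosites per block: average them.
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2
            blue = raw[r + 1][c + 1]
            out_row.append((red, green, blue))
        out.append(out_row)
    return out


# A flat 4x4 test scene where every pixel has the same (R, G, B) value.
scene = [[(200, 100, 50)] * 4 for _ in range(4)]
raw = mosaic(scene)           # one number per photosite
restored = demosaic_half(raw)  # 2x2 grid of RGB pixels
```

The point is simply that the "raw" data is a grid of single brightness values, and the color you see depends on how those values get recombined.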

It's always interesting to hear older cinematographers discuss the physical components of film and explain how its physical makeup affects image qualities like grain and light sensitivity. I wonder if, in the future, we'll have a similar appreciation of how different sensors created different images.

What do you think?

[via FilmmakerIQ]