What We Can Learn From the Immense Post-Production Pipeline of 'Avatar: The Way of Water'

Years in the making, Avatar: The Way of Water handled an immense amount of image data. This is how it was done and what you can learn.

The first Avatar set the bar for visual effects in stereoscopic 3D and, for years, held the record as the highest-grossing film of all time. Avatar: The Way of Water premiered 13 years later and is wowing audiences with incredible new VFX, including water sequences unlike anything seen on screen before.

But how did the team manage such a complex production and post-production pipeline?

Viewing 4K, HDR, and HFR Stereoscopic 3D Footage on Set

James Cameron and his team decided to shoot the new Avatar predominantly at 47.952 fps in HDR and stereoscopic 3D, which produced a lot of data to manage. They also shot in 3D 2K at both 48p HFR (high frame rate) and 24p. Most interesting of all, some of these formats were projected simultaneously, each at its native frame rate.
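To put that volume of data in perspective, here is a rough Python sketch of uncompressed data rates for the formats mentioned above. The frame sizes (DCI 4K at 4096 × 2160, DCI 2K at 2048 × 1080, HD at 1920 × 1080) and the 10-bit RGB assumption (30 bits per pixel, no blanking or audio) are ours, not figures from the production; the point is the relative scale. For reference, 47.952 fps is the rounded form of 48000/1001, the 48 fps counterpart of 23.976.

```python
# Rough uncompressed data rates for the capture/monitoring formats.
# Assumptions (ours, not the production's): DCI frame sizes and
# 10-bit RGB 4:4:4 (30 bits per pixel), no blanking or audio overhead.

FRAME_SIZES = {
    "4K": (4096, 2160),  # DCI 4K
    "2K": (2048, 1080),  # DCI 2K
    "HD": (1920, 1080),
}
BITS_PER_PIXEL = 30  # assumed 10-bit RGB


def pixel_rate(size: str, fps: float, stereo: bool = True) -> float:
    """Pixels per second for one format; stereo doubles it (left + right eye)."""
    width, height = FRAME_SIZES[size]
    eyes = 2 if stereo else 1
    return width * height * eyes * fps


def gbit_per_s(size: str, fps: float, stereo: bool = True) -> float:
    """Uncompressed data rate in gigabits per second under the assumptions above."""
    return pixel_rate(size, fps, stereo) * BITS_PER_PIXEL / 1e9


if __name__ == "__main__":
    for size, fps in [("4K", 47.952), ("2K", 48.0), ("2K", 24.0), ("HD", 24.0)]:
        print(f"{size} stereo @ {fps} fps: {gbit_per_s(size, fps):.2f} Gbit/s")
```

Under these assumptions, a 4K stereo stream at 47.952 fps carries almost exactly eight times the pixels per second of 2K stereo at 24 fps: four times the pixels per frame, times roughly twice the frame rate.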

From there, the stereoscopic footage had to go to Weta Digital for the intricate VFX work, and on through color grading and the rest of post. It was up to Geoff Burdick, Lightstorm Entertainment's Senior Vice President of Production Services and Technology, to develop a solution for managing this epic post-production pipeline, along with the tools the production and post teams used.

According to Burdick, some of these tools let the team view footage in conditions as close to the theatrical experience as possible, so they could make decisions on set in real time.

"This saves time during shooting, benefits Weta Digital, our visual effects vendor, and helps streamline our post-production and mastering process," he explained.

This is the same kind of workflow that virtual production affords creatives, and it is quickly becoming more affordable for tech-savvy filmmakers using Unreal Engine.

But at the time, those tools didn't exist yet, so early in pre-production Burdick reached out to Blackmagic Design to come up with a way to view all of that 3D footage (48p and 24p in 4K, 2K, and even HD, plus HDR) via a live feed to the team's DCI-compliant 'production pod.' Even before it acquired DaVinci Resolve and started building its own cameras, Blackmagic Design (BMD) offered an amazing array of image pipeline tools, and it still does.

"There were no instant answers, but they understood the vision and had ideas as to the best pathways to make it happen," Burdick said.

The team, led by Burdick and Robin Charters, a 3D Systems Engineer, worked with Blackmagic Design on the hardware solution to manage the various video feeds. It involved:

- Teranex AV standards converter

- Smart Videohub 12G 40x40 router

- DeckLink 8K Pro capture and playback card

- UltraStudio 4K Extreme 3 capture and playback device

- ATEM 4 M/E Broadcast Studio 4K live production switcher
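A router like the Smart Videohub 12G 40x40 is what lets any of those feeds reach any monitor or projector. As a hedged illustration, the Python sketch below builds the plain-text routing command described in Blackmagic's published Videohub Ethernet protocol (sent over a TCP connection on port 9990, with zero-indexed "output input" pairs under a `VIDEO OUTPUT ROUTING:` header and a blank line to finish), keeping each left/right eye pair together. The port numbers and the pairing scheme are hypothetical, and the source doesn't say the production controlled its router this way; check Blackmagic's developer documentation before relying on the exact format.

```python
from __future__ import annotations

ROUTER_SIZE = 40  # Smart Videohub 12G 40x40: 40 inputs and 40 outputs


def stereo_routes(left_in: int, right_in: int, left_out: int, right_out: int) -> dict[int, int]:
    """Map outputs to inputs so a left/right eye pair is always routed together.
    Port numbers are hypothetical and zero-indexed."""
    return {left_out: left_in, right_out: right_in}


def routing_block(routes: dict[int, int]) -> str:
    """Build a 'VIDEO OUTPUT ROUTING:' block with one 'output input' line per route,
    terminated by a blank line (format per our reading of the Videohub
    Ethernet protocol; verify against Blackmagic's documentation)."""
    lines = ["VIDEO OUTPUT ROUTING:"]
    for output, source in sorted(routes.items()):
        if not (0 <= output < ROUTER_SIZE and 0 <= source < ROUTER_SIZE):
            raise ValueError(
                f"output {output} from input {source} is outside a {ROUTER_SIZE}x{ROUTER_SIZE} router"
            )
        lines.append(f"{output} {source}")
    return "\n".join(lines) + "\n\n"


# Hypothetical patch: one camera's eyes on inputs 0/1 go to the projector feed on outputs 10/11.
block = routing_block(stereo_routes(left_in=0, right_in=1, left_out=10, right_out=11))
print(block)
```

Routing both eyes in one block, rather than as two separate commands, keeps the left and right feeds from ever pointing at different sources.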

With this setup, director James Cameron, cinematographer Russell Carpenter, and the production and post teams could watch the 3D, HFR, and HDR footage live on set, as close to the theatrical experience as possible.

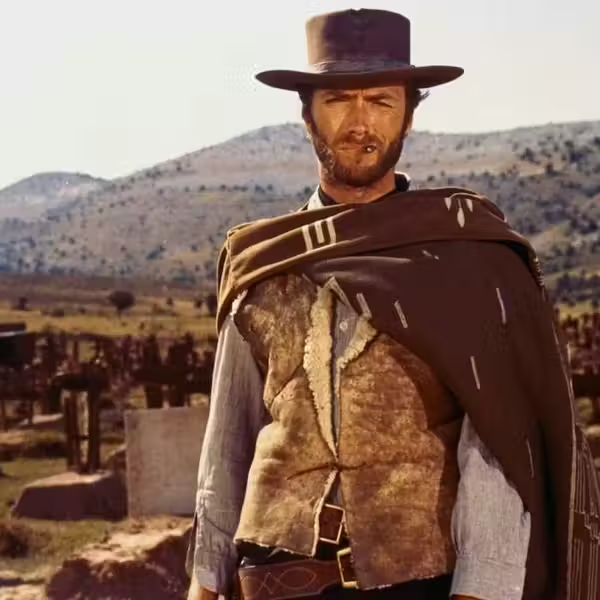

Filming Avatar: The Way of Water in the enormous water tank. Credit: Walt Disney Co

Color Grading a 3D Spectacle

The color grading was done by Tashi Trieu, who had worked for Lightstorm Entertainment for several years, including on Alita: Battle Angel. He came aboard in early 2019 for extensive 3D testing, particularly of the water sequences, which the production filmed in a massive 900,000-gallon tank.

Trieu and DI Editor Tom Willis used DaVinci Resolve for color grading and took advantage of the Resolve Python API to write a system for indexing VFX deliveries, helping to automate the workflow.
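The source doesn't describe how Trieu and Willis's indexer worked, so the following is only a sketch of the general idea in plain Python, not their code and not Resolve's API. It assumes a hypothetical file-naming convention of `{shot}_v{version}.{frame}.exr` and reports, for each shot, the newest delivered version, its frame range, and any gaps in that range. In a real pipeline, results like these would then be handed to Resolve through its scripting API.

```python
from __future__ import annotations

import re
from collections import defaultdict
from pathlib import Path

# Hypothetical naming convention: {shot}_v{version}.{frame}.exr, e.g. AVT_0420_v003.1001.exr
FRAME_RE = re.compile(r"^(?P<shot>.+?)_v(?P<ver>\d+)\.(?P<frame>\d+)\.exr$")


def index_deliveries(paths) -> dict[str, dict]:
    """Return {shot: {"version", "first", "last", "missing"}} for the newest version of each shot."""
    frames = defaultdict(list)  # (shot, version) -> delivered frame numbers
    for p in paths:
        m = FRAME_RE.match(Path(p).name)
        if m:  # ignore anything that doesn't follow the convention
            frames[(m["shot"], int(m["ver"]))].append(int(m["frame"]))

    latest: dict[str, dict] = {}
    for (shot, ver), nums in frames.items():
        if shot in latest and ver <= latest[shot]["version"]:
            continue  # an equal or newer version of this shot is already indexed
        nums.sort()
        latest[shot] = {
            "version": ver,
            "first": nums[0],
            "last": nums[-1],
            # frame numbers absent from inside the delivered range
            "missing": sorted(set(range(nums[0], nums[-1] + 1)) - set(nums)),
        }
    return latest
```

For example, `index_deliveries(Path("/mnt/vfx_deliveries").rglob("*.exr"))` would index a (hypothetical) delivery folder. Flagging missing frames at ingest is cheaper than discovering a gap during a stereo review.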

Once new VFX shots arrived for the DI (digital intermediate), the team could ingest them, conform them against an EDL, and almost instantly have an updated layer of all the latest shots, ready for final stereo reviews in scene context.

Credit: Walt Disney Co

"Weta Digital's approach left a lot of creative latitude for us in the DI, and our show LUT is an elegantly simple S curve with a straightforward gamut mapping from SGamut3.Cine to P3D65," explained Trieu. That simplicity gave the colorist the flexibility to push the sequel's imagery toward photorealism and even toward a pastel look.

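Trieu's description maps onto two stages: a gamut conversion from S-Gamut3.Cine to P3-D65, then an S-shaped tone curve. The Python sketch below illustrates those stages; it is not the show LUT, whose exact curve and gamut-mapping details aren't given here. Assumptions: input is linear RGB in S-Gamut3.Cine normalized to 0 to 1 (real footage would first be decoded from its log encoding), the "gamut mapping" is a plain clip after the 3 × 3 matrix, the S-curve is a simple symmetric contrast function we chose, and no display encoding is applied. The primaries are Sony's published S-Gamut3.Cine values and the standard P3-D65 values.

```python
# Illustrative two-stage "show LUT": gamut matrix + clip, then an S-curve.
# Not the production LUT; curve shape and clipping are our assumptions.

SGAMUT3_CINE = [(0.766, 0.275), (0.225, 0.800), (0.089, -0.087)]  # R, G, B (CIE xy)
P3_D65 = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]
D65 = (0.3127, 0.3290)  # both gamuts share this white point


def xy_to_xyz(x, y):
    return [x / y, 1.0, (1.0 - x - y) / y]  # XYZ with Y = 1


def inv3(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    d = (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
         - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
         + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))
    return [
        [(m[1][1] * m[2][2] - m[1][2] * m[2][1]) / d, (m[0][2] * m[2][1] - m[0][1] * m[2][2]) / d, (m[0][1] * m[1][2] - m[0][2] * m[1][1]) / d],
        [(m[1][2] * m[2][0] - m[1][0] * m[2][2]) / d, (m[0][0] * m[2][2] - m[0][2] * m[2][0]) / d, (m[0][2] * m[1][0] - m[0][0] * m[1][2]) / d],
        [(m[1][0] * m[2][1] - m[1][1] * m[2][0]) / d, (m[0][1] * m[2][0] - m[0][0] * m[2][1]) / d, (m[0][0] * m[1][1] - m[0][1] * m[1][0]) / d],
    ]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def matvec(m, v):
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def rgb_to_xyz_matrix(primaries, white):
    # Columns are each primary's XYZ, scaled so RGB (1, 1, 1) lands on the white point.
    cols = [xy_to_xyz(x, y) for x, y in primaries]
    p = [[cols[j][i] for j in range(3)] for i in range(3)]
    s = matvec(inv3(p), xy_to_xyz(*white))
    return [[p[i][j] * s[j] for j in range(3)] for i in range(3)]


# S-Gamut3.Cine RGB -> XYZ -> P3-D65 RGB
SG3C_TO_P3 = matmul(inv3(rgb_to_xyz_matrix(P3_D65, D65)), rgb_to_xyz_matrix(SGAMUT3_CINE, D65))


def s_curve(x, contrast=1.5):
    # Symmetric S-curve on [0, 1]: fixes 0, 0.5 and 1, steepens the midtones.
    return x ** contrast / (x ** contrast + (1.0 - x) ** contrast)


def show_lut(rgb, contrast=1.5):
    p3 = matvec(SG3C_TO_P3, rgb)
    clipped = [min(max(c, 0.0), 1.0) for c in p3]  # crude gamut mapping: clip
    return [s_curve(c, contrast) for c in clipped]
```

Because both gamuts share the D65 white point, neutral values such as `[0.18, 0.18, 0.18]` pass through the matrix unchanged, and only the tone curve alters them. A plain clip is the bluntest possible gamut mapping and can shift the hue of very saturated colors; production LUTs usually handle out-of-gamut values more gently.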
"Even on a state-of-the-art workstation with 4x A6000 GPUs, real-time performance is difficult to guarantee," said Trieu of working on the 3D HDR and HFR footage. "It’s a really delicate balance between what’s sustainable over the SAN’s bandwidth and what’s gentle enough for the system to decode quickly."

"Every shot was delivered as OpenEXR frames with as many as five or six layers of mattes for me to use in the grade."

'Avatar: The Way of Water.' Credit: Walt Disney Co

Weta wrote the RGB layer as uncompressed data but ZIP-compressed the mattes within the same file, which improved playback performance and sped up rendering of their deliverables. It also saved storage space, since the compressed mattes added far less to each file than uncompressed mattes would have.
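To see why that layout helps with the SAN bandwidth Trieu mentions, here is a back-of-the-envelope Python estimate of per-frame size and stereo playback throughput. Every input is an assumption: a DCI 4K frame, 16-bit half-float channels, six single-channel mattes, and a 4:1 ZIP ratio on the mattes (real ratios depend entirely on image content). It ignores EXR headers and offset tables. Separately, OpenEXR sets compression per part in the file header, so one plausible way to store uncompressed RGB and ZIP-compressed mattes together is a multi-part EXR; the source doesn't say how Weta did it.

```python
def exr_frame_bytes(width: int, height: int, bytes_per_channel: int = 2,
                    matte_channels: int = 6, matte_zip_ratio: float = 4.0) -> float:
    """Approximate bytes per EXR frame: uncompressed RGB plus ZIP-compressed mattes.
    All defaults are assumptions, not production figures."""
    pixels = width * height
    rgb = pixels * 3 * bytes_per_channel  # RGB stored uncompressed
    mattes = pixels * matte_channels * bytes_per_channel / matte_zip_ratio
    return rgb + mattes


def stereo_playback_mb_per_s(width: int, height: int, fps: float, **kwargs) -> float:
    """Sustained read rate (MB/s, 1 MB = 10**6 bytes) to play both eyes in real time."""
    return exr_frame_bytes(width, height, **kwargs) * fps * 2 / 1e6


if __name__ == "__main__":
    mixed = stereo_playback_mb_per_s(4096, 2160, 47.952)
    all_raw = stereo_playback_mb_per_s(4096, 2160, 47.952, matte_zip_ratio=1.0)
    print(f"RGB raw + ZIP mattes:    {mixed:,.0f} MB/s")
    print(f"everything uncompressed: {all_raw:,.0f} MB/s")
```

With these particular assumptions, compressing only the mattes halves the per-frame size, while the RGB stays uncompressed and needs no decoding. That is the balance Trieu describes between what the SAN can sustain and what the workstation can decode quickly.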

What Can We Learn?

While James Cameron's new sci-fi epic is a massive technological achievement, everyday creatives can still learn a thing or two from it, especially since programs like DaVinci Resolve, Unreal Engine, and Blender are free (ish). Resolve Studio will run you about $295, and Unreal Engine may cost you money if you're working on a big commercial production.

However, when you use these tools on VFX-laden projects, communication is key, not only between the different creatives on your team but also between your software and hardware. Consider your workflow from beginning to end before you even set foot on set. Don't just plan your shots and hand the memory card to post-production when you're done; there are affordable tools to get everything working in sync. And if you're not using VFX at all? How your image is captured and passed on to the next step in your pipeline is still critical. Consider a cloud-based post-production workflow, such as Frame.io or Blackmagic Cloud.
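On the capture side, one widely used safeguard when handing media to post is a checksum-verified offload: copy every file, hash both the source and the copy, and keep a manifest so the next step in the pipeline can re-verify. The Python sketch below shows the idea with SHA-256 from the standard library. The manifest file name and its `sha256sum`-style line format are our choices, and note that re-reading a copy immediately may be served from the operating system's cache rather than the disk itself.

```python
from __future__ import annotations

import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large camera files don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verified_offload(card: str, destination: str) -> dict[str, str]:
    """Copy every file from a card to a destination, verify each copy, and write a manifest."""
    src_root, dst_root = Path(card), Path(destination)
    dst_root.mkdir(parents=True, exist_ok=True)
    manifest = {}
    for src in sorted(p for p in src_root.rglob("*") if p.is_file()):
        rel = src.relative_to(src_root)
        dst = dst_root / rel
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copies file data and timestamps
        src_hash = sha256_of(src)
        if sha256_of(dst) != src_hash:
            raise IOError(f"checksum mismatch after copying {rel}")
        manifest[rel.as_posix()] = src_hash
    # sha256sum-style manifest (HASH, two spaces, PATH) so post can re-verify later
    lines = [f"{h}  {p}" for p, h in manifest.items()]
    (dst_root / "manifest.sha256").write_text("\n".join(lines) + "\n")
    return manifest
```

Because the manifest travels with the media, whoever receives the drive (an editor, a colorist, or a cloud upload step) can confirm nothing changed in transit.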

Sometimes, filmmakers will focus only on the story, and while that's an absolute priority, you should not forget that your technology is the foundation that keeps your story moving forward. Don't be caught out in the cold when you wrap your production and realize you only have weeks to edit before a festival or delivery deadline. On top of that, everyone usually relaxes after a production wraps. But we all know that's when the next phase begins!

What did you learn from the Avatar: The Way of Water workflow? Let us know in the comments!