There Is Finally a Solution to AI Plagiarism

Many teachers and professors consider using AI-generated text a kind of plagiarism. Now, one college senior has built an app that aims to show who, or what, wrote a text.

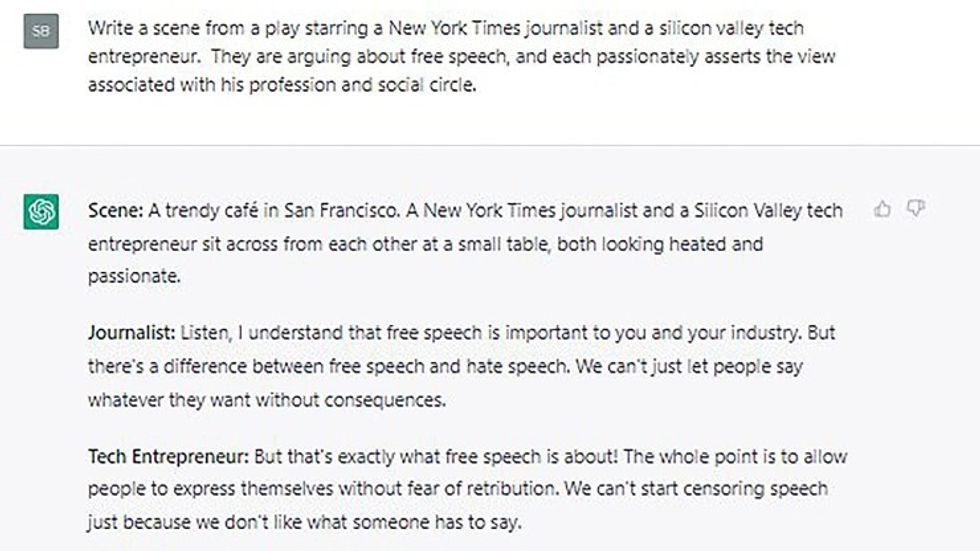

AI-generated text is all the rage. Chatbots that can create natural-sounding text, such as ChatGPT and Google’s LaMDA, are becoming so ubiquitous that students have turned to artificial intelligence to write their term papers, with scary-good results. Since its debut late last year, ChatGPT has passed a million users, and that is only the tip of the iceberg.

Moreover, Microsoft is investing over ten billion dollars in OpenAI, the creator of ChatGPT, with plans to integrate the chatbot into Microsoft Office. Kindle authors, as discussed here a few days ago, have begun using the chatbot to keep up with the rapid 4-6 week turnaround of eBooks.

This so-called “AI plagiarism” is rapidly becoming a concern for educators, college professors, and professional writers, who fear that people will use AI as a shortcut to lighten their workloads. Until recently, there was no reliable way to tell whether a text had been crafted by a human wordsmith or generated by a chatbot.

But one college student has created a stopgap solution that could help many educators, editors, and studio executives.

A Tool to Fight Back Against AI

Edward Tian, a senior in Princeton University’s computer science program, created a tool that he says can “quickly and efficiently” analyze written text and determine whether it was written by a human or a machine. Tian built the app over winter break and released it on January 2nd.

Within a week, over thirty thousand users had downloaded the app to analyze text, most of them teachers.

“The motivation here is increasing AI plagiarism,” Tian tweeted with his announcement. “Are high school teachers going to want students using ChatGPT to write their history essays? Likely not.”

Tian’s app, dubbed GPTZero, considers two metrics in analyzing text: its “perplexity” and its “burstiness.”

Perplexity measures how random, or unpredictable, a text looks to a language model. If GPTZero finds the text too “perplexing,” it was likely written by a human being. If the model finds the text easy to predict, it was more likely written by a chatbot like ChatGPT.
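To make the idea concrete, here is a minimal sketch of how perplexity is calculated. A real detector would score text with a large neural language model; this toy version swaps in a hypothetical bigram model with add-one smoothing, trained on a tiny made-up corpus, so the corpus and function names are assumptions for illustration only. Perplexity is the exponential of the average negative log-probability the model assigns to each word, so text the model predicts well scores low.

```python
import math
from collections import Counter

# Hypothetical toy corpus standing in for a language model's training data.
CORPUS = "the cat sat on the mat . the dog sat on the rug .".split()


def train_bigram(tokens):
    """Count unigrams and adjacent word pairs (bigrams) in a token list."""
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    return unigrams, bigrams


def perplexity(tokens, unigrams, bigrams):
    """Return exp(-(1/N) * sum(log P(w_i | w_{i-1}))) over the N bigrams in tokens.

    P is estimated with add-one (Laplace) smoothing, so unseen pairs
    get a small nonzero probability instead of zero.
    """
    if len(tokens) < 2:
        raise ValueError("need at least two tokens to score bigrams")
    vocab_size = len(unigrams) + 1  # +1 reserves probability mass for unknown words
    pairs = list(zip(tokens, tokens[1:]))
    total_log_prob = 0.0
    for prev, word in pairs:
        p = (bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size)
        total_log_prob += math.log(p)
    return math.exp(-total_log_prob / len(pairs))


unigrams, bigrams = train_bigram(CORPUS)
predictable = "the cat sat on the mat".split()
surprising = "mat the on rug cat dog".split()

print(perplexity(predictable, unigrams, bigrams) < perplexity(surprising, unigrams, bigrams))  # True
```

The scrambled sentence scores higher because the model rarely or never saw those word pairs, which mirrors the point above: text a model struggles to predict looks less machine-like.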

The app also measures the “burstiness” of a text. According to Tian, humans often write in bursts of short sentences followed by longer sentences with more complex explanations and descriptions, while AI-written text tends to be more uniform. By combining these two metrics, GPTZero estimates how likely a text is to be human- or machine-written, and educators are already embracing it as a way to catch students cheating.
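Burstiness can be sketched in a similar spirit. The code below uses one simple proxy, the coefficient of variation (standard deviation divided by mean) of sentence lengths in words. This is an assumption for illustration, not GPTZero's actual formula, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text):
    """Naively split on runs of ., !, or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence lengths (population stdev / mean).

    0.0 means every sentence has the same length; larger values mean a
    bigger mix of short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # a single sentence has no variation to measure
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
bursty = ("Stop. The storm rolled in over the hills before anyone "
          "had a chance to react. We ran.")

print(burstiness(uniform))  # 0.0
print(burstiness(bursty) > burstiness(uniform))  # True
```

A score near zero means every sentence is about the same length, the uniform pattern associated here with AI-written text; higher scores reflect the mix of short and long sentences Tian attributes to human writers.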

But could studios use GPTZero to determine whether a book or screenplay passed off as the original work of a screenwriter or novelist was actually written by AI? Screenwriter John August put the question to the test.

“I’m not sure [that] I could reliably spot the differences between sentences assembled by humans versus machines,” August wrote on his blog. “But maybe that’s just my human bias.”

August set up a “quick, not-at-all-scientific test” in which he asked ChatGPT to write three paragraphs explaining what a manager does for screenwriters. The chatbot completed the task in seconds. August then ran the paragraphs through GPTZero, alongside a human-written explanation from Screenwriting.io.

While August was impressed by how natural ChatGPT’s explanations sounded, Tian’s digital sleuth correctly identified which text was written by AI and which was written by a human. Next, August tried to fool the tool by spending a few minutes rewriting the AI-generated text in a more human-sounding style. When he ran the reworded text through GPTZero, the app gave it the human-crafted seal of approval.

The takeaway is that a writer can use a chatbot like ChatGPT to draft text on a subject they know little about, then rewrite it in their own voice and style so that it passes muster with the very tools designed to sniff out AI writing assistance.

“GPTZero is looking for patterns a human likely wouldn’t notice, which makes sense,” August concludes in his blog. “But an AI model trained to provide responses with high perplexity and burstiness would likely evade detection. It’s interesting to see this arms race play out.”

While August believes that GPTZero can sniff out AI plagiarism for now, he also understands human nature and suspects that students and writers will find a workaround. The question then is: Would that be a bad thing? As one AI advocate tells her students, we already use AI in our everyday lives when we search for information, write basic correspondence, or check that our grammar is up to snuff.

Could using a chatbot to get the ball rolling on a piece of writing really be all that bad?

Food For Thought

Well, that depends on where this all goes. In only a few short years, AI has gone from producing little more than “word salad” to generating natural-sounding text with complex ideas and proper grammar. August believes that once an AI is trained on more data to produce the right perplexity and burstiness, it will evolve to the point that detection tools won’t be able to catch it in the act.

Then again, tools like GPTZero will also have to get smarter, evolving to keep up with how well AI-driven chatbots learn to craft the written word. So, while writers may be worried about becoming obsolete in a world that’s fallen in love with artificial intelligence, there is some pushback that can at least attempt to keep it all in check.

Will a world that loves technology resist welcoming our new AI-driven overlords before it's too late? What do you think? Let us know in the comments!

Source: NPR