How Cutting-Edge ILM Technology Brought 'The Mandalorian' to Life

What is StageCraft and how did it help The Mandalorian come to life for Disney+?

If you watched The Mandalorian, you know the best part about it was the special effects. Everything looked real. It was a level of authenticity people were not used to seeing on television. Scenes popped off the screen and transported you into new worlds of adventure.

But what was showcased on The Mandalorian was a long time in the making.

It was a technique that started on Rogue One and has evolved with every Star Wars film since.

They call it "StageCraft," and it has revolutionized the way productions set up and shoot scenes. It's the biggest thing since blue and green screens, and it's incredibly important to the future of film and television.

So what is it?


To understand this complex leap in technology, let's jump into the past.

While shooting Rogue One, director of photography Greig Fraser, ACS, ASC, ran into some problems. The cockpit scenes were not turning out the way he wanted, so he came up with the idea of shooting them in front of an LED screen that displayed the exterior space environments.

Why was this such a great idea?

Well, the process allowed cinematographers to capture the background effects in camera, which is a big part of what makes things look real. It also allowed for interactive light and reflections in real time. That meant far fewer of those effects would need to be added in post by Industrial Light & Magic (ILM).

So, it was full of wins for every team involved in the movie.

The idea made an impression, and soon it made its way up to ILM VFX supervisor Richard Bluff, along with associate Kim Libreri and ILM creative director Rob Bredow.

As you know, ILM prides itself on being at the cutting edge of this kind of tech. Their conversations with Fraser impressed them, and from this one stray idea about shooting cockpits better, they asked one big "what if."

What if you used LED lights and effects in a bigger environment, say, to stage an entire landscape or environment?

Implementing StageCraft in a Galaxy Far, Far Away...

So Greig Fraser sat down with ILM, and they formulated a plan. They talked about trying something on this scale on the new TV show The Mandalorian, mostly because they knew its creator would be game.

As Fraser explains, “Jon [Favreau] was adamant that due to the scope and scale of what would be expected from a live-action Star Wars TV show, we needed a game-changing approach to the existing TV production mold. It was the same conclusion George [Lucas] had arrived at more than 10 years ago during his TV explorations, however, at that time the technology wasn’t around to spur any visionary approaches.”

Well, now they had the vision, the visionary, and the technology.

So how did they bring it all together?

Using Unreal Engine

Video games are constantly pushing the envelope when it comes to real-time graphics. While their characters are animated, they look more and more "real" every day.

So when the team pitched using Epic Games' Unreal Engine to render backdrops that would blend with the practical effects, Favreau was excited and on board for the adventure.

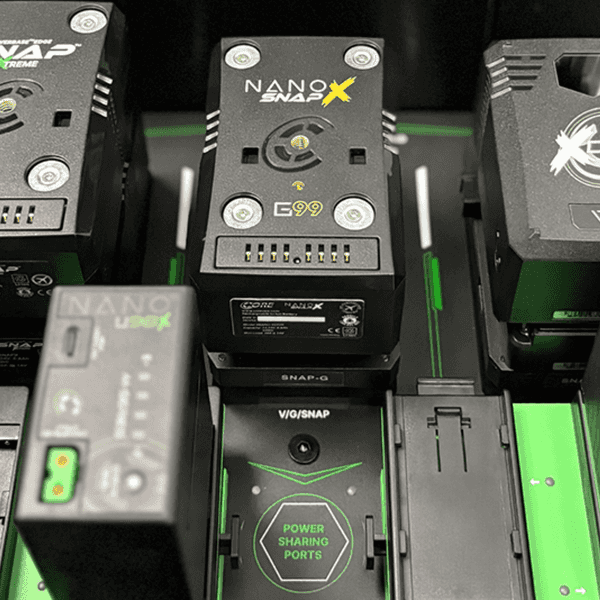

The resulting tool rendered environments in real time and displayed them on LED screen walls.

The prototype wall had a 35-foot-wide capture volume and was covered with LED panels with a fine 2.8-millimeter pixel pitch.

Fraser told ICG magazine, “The results on screen have a lot less moire, which is the trickiest part of working out the shooting of LED screens. If the screen is in very sharp focus, the moire can come through. That factored into my decision to shoot as large a format as possible, to ensure the lowest possible depth of field.”
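Fraser's point about format size follows from the standard thin-lens depth-of-field approximation: keeping the same framing on a bigger sensor calls for a longer lens, and at the same stop that narrows the zone of sharp focus. The Python sketch below is a hypothetical comparison, not figures from the production. It assumes a Super 35 frame with a 35 mm lens versus a large-format frame roughly 1.4 times bigger with a 49 mm lens for the same field of view, a circle of confusion that scales with frame size (0.025 mm vs. 0.035 mm), a 4 m focus distance, and T2.5 treated as f/2.5 (depth of field really depends on the f-number, not the T-stop).

```python
import math


def depth_of_field(focal_mm: float, f_number: float, coc_mm: float, focus_mm: float) -> float:
    """Total depth of field in mm, using the thin-lens hyperfocal approximation."""
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    if focus_mm >= hyperfocal:
        return math.inf  # far limit extends to infinity
    near = focus_mm * (hyperfocal - focal_mm) / (hyperfocal + focus_mm - 2 * focal_mm)
    far = focus_mm * (hyperfocal - focal_mm) / (hyperfocal - focus_mm)
    return far - near


# Assumed, illustrative setups with the same field of view, both focused at 4 m.
s35 = depth_of_field(focal_mm=35, f_number=2.5, coc_mm=0.025, focus_mm=4000)
lf = depth_of_field(focal_mm=49, f_number=2.5, coc_mm=0.035, focus_mm=4000)
print(f"Super 35: {s35:.0f} mm in focus, large format: {lf:.0f} mm in focus")
```

With these assumed numbers the large-format setup holds roughly 1.2 m in focus versus about 1.7 m for Super 35, so an LED wall a few meters behind the actors sits further outside the sharp zone, which is what keeps the moire at bay.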

To film against the wall, they shot with the large-format ARRI ALEXA LF. They paired it with Ultra Vista anamorphic lenses built by Panavision, whose fast focus fall-off let them duck the moire issue while still shooting anamorphic.

They used Panavision's prototype 75mm and 100mm lenses, along with T2.5 lenses at 50mm, 65mm, 75mm, 100mm, 135mm, 150mm, and 180mm to cover a range of focal lengths.

Fraser said, “Combining the LF sensor with the 1.65 squeeze on the Ultra Vistas, you get a native 2.37 [aspect ratio]. Those lenses have a handmade feel in addition to being large format, and a great sense of character. We didn’t use any diffusion filtration at all.”
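The arithmetic behind Fraser's "native 2.37" is simple: an anamorphic lens squeezes the image horizontally by its squeeze factor, so un-squeezing multiplies the recorded width by that factor. The sketch below assumes the ALEXA LF's Open Gate recording area of 4448 x 3096 photosites (about 1.44:1); that figure is not from the article.

```python
def desqueezed_aspect(width_px: int, height_px: int, squeeze: float) -> float:
    """Aspect ratio of the final image after the anamorphic squeeze is undone."""
    return width_px * squeeze / height_px


# Assumption: ALEXA LF Open Gate recording area, in photosites.
LF_OPEN_GATE_WIDTH, LF_OPEN_GATE_HEIGHT = 4448, 3096
ULTRA_VISTA_SQUEEZE = 1.65  # squeeze factor quoted by Fraser

ratio = desqueezed_aspect(LF_OPEN_GATE_WIDTH, LF_OPEN_GATE_HEIGHT, ULTRA_VISTA_SQUEEZE)
print(f"{ratio:.2f}:1")  # 2.37:1
```

Working backward, 2.37 / 1.65 is about 1.44, so a 1.65 squeeze fills a 2.37 frame from a sensor area of that shape without cropping.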

But even with all this testing, they still needed StageCraft to be greenlit.

Greenlighting StageCraft

There was a lot of concern that the project would be expensive and still look cheap. We know now that was not the case, but in a universe determined to mix the practical and the digital, it felt like everything was at stake.

Lucky for them, and us, ILM greenlit the project after seeing it in action.

But that was not the hardest battle.

That came in combining technology from many different outside vendors into one internal pipeline.

ILM creative director Matt Madden, who served as The Mandalorian’s virtual production supervisor, says “UE machines handled all content rendering on the LED walls, including real-time lighting and effects, and projection mapping to orient the rendered content on each LED panel, deforming content to match Alexa’s perspective. For each take, the StageCraft operator was responsible for recording the slate and associated metadata that would be leveraged by ILM in their postproduction pipeline. ILM developed a low-res scanning tool for live set integration within StageCraft, taking advantage of Profile’s capture system to calculate the 3D location of a special iPhone rig, recording the live positions of a specific point on the rig to generate 3D points on live set pieces. The Razor Crest set, a partial set build, used this approach, with the rest of the ship a virtual extension into the LED wall. Controls on an iPad triggered background animation, giving the impression the physical Razor Crest set was moving. Once imagery had been rendered for each section of LED wall, the content was streamed to the Lux Machina team, which QC’d both the live video stream and the re-mapping of content onto walls, [which] Fuse Technical Group operated and maintained.”
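Madden's phrase "deforming content to match Alexa's perspective" reflects the fact that the wall image only looks correct from the camera's tracked position. The hypothetical sketch below illustrates that idea in its simplest form; it is not ILM's or Unreal Engine's implementation. It assumes a single flat wall at z = 0 and an ideal pinhole camera, and finds where each virtual point must be drawn by intersecting the camera-to-point sight line with the wall. The `Vec3` type and `wall_point_for` function are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


def wall_point_for(camera: Vec3, virtual_point: Vec3, wall_z: float = 0.0) -> Optional[Vec3]:
    """Return where a virtual-world point must be drawn on a flat LED wall (the plane
    z = wall_z) so that, seen from the tracked camera, it lines up with its 3D position.
    Returns None if the wall does not sit between the camera and the point."""
    dz = virtual_point.z - camera.z
    if dz == 0:
        return None  # sight line runs parallel to the wall
    t = (wall_z - camera.z) / dz  # fraction of the way from camera to point
    if not 0 < t <= 1:
        return None  # wall is behind the camera, or the point is in front of the wall
    return Vec3(
        camera.x + t * (virtual_point.x - camera.x),
        camera.y + t * (virtual_point.y - camera.y),
        wall_z,
    )


# A virtual mountain peak 50 m behind the wall; y is up, camera starts 5 m from the wall.
peak = Vec3(10.0, 3.0, -50.0)
for cam_x in (0.0, 1.0):  # dolly the camera 1 m sideways
    spot = wall_point_for(Vec3(cam_x, 1.7, 5.0), peak)
    print(f"camera x={cam_x}: draw peak at wall x={spot.x:.3f}, y={spot.y:.3f}")
```

Moving the camera 1 m sideways shifts the drawn peak about 0.9 m along the wall, so it travels almost with the camera, just as a distant object should barely shift in frame. That is why the wall content has to be re-rendered continuously from the tracked camera position.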

You want to know something nuts?

They were able to control much of this remotely, using just an iPad.

The team that controlled all of this was called the "Brain Bar."

To pull off the rest of the shoot, they came up with a new way to do business on set.

Let's go through the steps:

- The Brain Bar loads a 3D environment matching the physical set build into Unreal Engine.

- They use an iPad running a UE4 interface to update the LED wall imagery remotely.

- They go over the look with the DP.

- They dial in the position and orientation of the virtual world for the first camera setup.

- They adjust the lighting based on notes from the DP.

- They make final adjustments to the 3D scene itself based on input from the VFX supervisor (such as color-correcting the dirt on the virtual ground to match the physical set).
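Two of those steps, dialing in the virtual world's position and orientation and color-correcting the virtual ground, come down to a rigid transform and a color adjustment. The Python sketch below is a conceptual illustration, not the Brain Bar's actual tools, which lived inside Unreal Engine. It assumes a z-up coordinate frame, a yaw-only rotation, and a simple per-channel gain; `place_virtual_world` and `color_correct` are hypothetical helpers.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]  # (x, y, z) in meters, z up
RGB = Tuple[float, float, float]    # color channels, each in [0, 1]


def place_virtual_world(p: Point, yaw_deg: float, offset: Point) -> Point:
    """Rotate a virtual-world point about the vertical axis, then shift it, so the
    virtual environment lines up with the physical set for a camera setup."""
    yaw = math.radians(yaw_deg)
    x, y, z = p
    xr = x * math.cos(yaw) - y * math.sin(yaw)
    yr = x * math.sin(yaw) + y * math.cos(yaw)
    return (xr + offset[0], yr + offset[1], z + offset[2])


def color_correct(rgb: RGB, gains: RGB) -> RGB:
    """Apply a per-channel gain and clamp to [0, 1], e.g. to match virtual dirt to real dirt."""
    r, g, b = (min(1.0, max(0.0, c * k)) for c, k in zip(rgb, gains))
    return (r, g, b)


# Hypothetical adjustments for one setup: turn the world 90 degrees, slide it 2 m along x,
# and warm up the virtual ground slightly.
rock = place_virtual_world((1.0, 0.0, 0.0), yaw_deg=90.0, offset=(2.0, 0.0, 0.0))
dirt = color_correct((0.50, 0.42, 0.30), gains=(1.10, 1.00, 0.90))
print(rock, dirt)
```

Keeping placement and color as separate adjustments mirrors the list: the DP's notes and the VFX supervisor's notes can each be dialed in without disturbing the other.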

Sheesh.

StageCraft in Action

Series director of photography Barry “Baz” Idoine was used to challenging shoots, but nothing could prepare him for The Mandalorian, mostly because everything they were doing was new.

He told ICG, “Seeing this 3D rear-projection of a dynamic real-time photoreal background through the viewfinder is tremendously empowering. It’s phenomenal because it gives so much power back to the cinematographer on set, as opposed to shooting in a green screen environment where things can get changed drastically in post.”

They mess up, make changes, and do reshoots, but they're all in this together. And the team embraces that.

So many teams have to come together to get this kind of tech to work on screen, but everyone involved understands that they're doing something new, cool, and important.

They're proud.

Fraser supports working in uncharted territory, but only when the technology earns its place. “Technology serves us; we don’t serve it. So, it doesn’t make sense, to me, to embrace something just because it is new. But if it can help us do things as well as if we were doing it for real, but more economically, that makes good sense. Every day [on The Mandalorian] we were making decisions on how to go forward with this process, so it was like history evolving as we worked. Each of the various departments works independently, but at the end of the day, they had to stand together.”

Where Is StageCraft Going?

As Unreal Engine gets better and better, there's no telling where they can take the tech. For The Mandalorian, the plan is to use it for more character work. Right now, the engine isn't great at rendering large crowds of people. They'd like that to change.

They also acknowledge that the approach can be hard to adapt when the story changes. Right now, the virtual environments get approved months in advance, so if the story or ideas change, that can be a big setback.

Still, every day the tech gets better and more powerful.

The challenges of season one won't necessarily be the challenges of season two and beyond.

Count me in as one of the most excited to see how this is used in Star Wars and across all of Disney's franchises.

It's an exciting time to be a movie and TV lover.

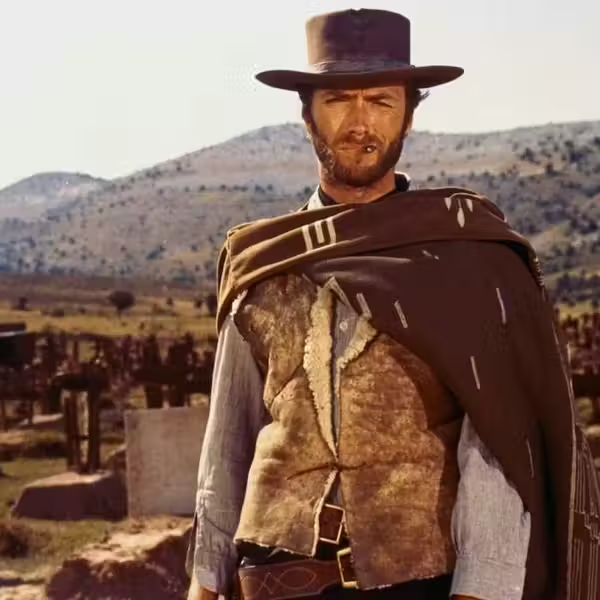
