Sony and Epic Games Are Changing Virtual Production, and This Matters to All Filmmakers

The incredible resolution, dynamic range, and color accuracy of Sony’s Crystal LED displays come together with Unreal Engine to create realistic images that empower the filmmaking community.

Camera technology has advanced by leaps and bounds in the past 15 years. The Canon 5D Mark II rocked the filmmaking world when it let creatives shoot video on a full-frame sensor with Canon’s entire line of EF lenses. Since then, 4K has become a standard, thanks in part to Netflix, and filmmakers can now shoot 8K for under $5,000 and even 12K for around $10,000.

But another major change is on the horizon. Virtual productions, which replace green or blue screen fabrics with large LED walls or a wraparound LED "volume" like the one used on Disney’s The Mandalorian, are quickly becoming a popular alternative.

What Is a Virtual Production?

Many modern films, TV shows, and commercials shoot on a soundstage, where every detail can be controlled. Everything from lighting to production design is carefully crafted and made repeatable.

While shooting on location is also an option, a soundstage is often the more economical choice. Creatives aren’t at the mercy of the weather, constantly changing light, and a myriad of other on-location issues.

Sometimes a green screen is used on a soundstage to supplement practical sets with VFX elements. Other times, everything is shot against a green screen backdrop that is later replaced with digital effects or background plates shot on location.

But these green screen workflows have their limitations. Matching the lighting on set to the VFX elements can be tricky and time-consuming, and making the composite seamless can get expensive in post-production.

This is where virtual production can mitigate these issues.

By using high-resolution LED displays, filmmakers are able to show VFX elements and background plates in real time around a practical set. There is no need to key out a green screen. The color-accurate light from the LED panels also does much of the lighting work for cinematographers, and actors benefit from seeing the visual elements that surround them instead of having to imagine them. The days of tennis balls on a stick might be a thing of the past for some productions.

Oblivion director Joseph Kosinski created a similar production environment by projecting footage onto giant screens surrounding the set when filming the Sky Tower scenes.

Sony’s Push for Virtual Production

Virtual production technology is still in its infancy, and some of it is even custom-built for each production. But Sony is stepping up to the plate to create dedicated products for filmmakers.

Sony’s new Crystal LED B-Series was purpose-built for virtual productions in close collaboration with Sony Pictures Entertainment and the filmmaking community.

These displays are built around accurate color reproduction, wide dynamic range, and high resolution, so that anything the camera records looks like the real thing.

Sony also offers dedicated display controllers that give creatives a prebuilt pipeline for displaying their digital environments or background plates of real locations. There is no need to build your own tech to achieve your creative vision.

Virtual production workflows also have another amazing benefit over traditional green screens.

Motion tracking.

A tracker mounted on the camera records the camera’s position and orientation in 3D space. That data is processed in real time, so the background on the LED volume or wall can be rendered from the camera’s point of view and shift with the correct perspective as the camera moves.
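The geometry behind that real-time matching can be sketched in a few lines. This is a simplified illustration, not how Sony’s controllers or Unreal Engine actually implement it: it assumes a single flat wall lying in the plane z = 0, treats the camera as a single point, and uses a hypothetical function name, `project_to_wall`. Given the tracked camera position, it finds where on the wall a point from the virtual scene has to be drawn so that it lines up with the camera’s line of sight.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def project_to_wall(camera: Vec3, virtual_point: Vec3) -> Tuple[float, float]:
    """Return the (x, y) spot on a flat LED wall where a virtual point must be
    drawn so it sits on the tracked camera's line of sight.

    Hypothetical convention (an assumption for this sketch): the wall is the
    plane z = 0, the camera stands on the stage side (z < 0), and the virtual
    scene lies "behind" the wall (z > 0). Units are metres.
    """
    cx, cy, cz = camera
    px, py, pz = virtual_point
    if cz >= 0 or pz <= 0:
        raise ValueError("camera must be in front of the wall and the point behind it")
    # Fraction of the way from the camera to the point where the ray crosses z = 0.
    t = -cz / (pz - cz)
    return (cx + t * (px - cx), cy + t * (py - cy))


if __name__ == "__main__":
    peak = (0.0, 1.5, 10.0)  # a virtual mountain peak 10 m behind the wall
    # Camera 5 m in front of the wall and centred: the peak is drawn at x = 0.
    print(project_to_wall((0.0, 1.5, -5.0), peak))
    # The tracker reports the camera dollied 1 m to the right: the peak must be
    # redrawn roughly 0.67 m to the right to keep the parallax believable.
    print(project_to_wall((1.0, 1.5, -5.0), peak))
```

Points far behind the wall slide almost as far as the camera moves, while points close to the wall barely move. That difference is the parallax a real location would show, and it is why the background has to be updated continuously as the camera moves.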

But the hardware is only half the battle. This is where Epic Games comes in.

Sony and Epic Games

It might seem strange to see a gaming company take part in the film industry, but Epic Games has some incredible technologies that filmmakers can tap into.

Specifically, Unreal Engine and Quixel Megascans.

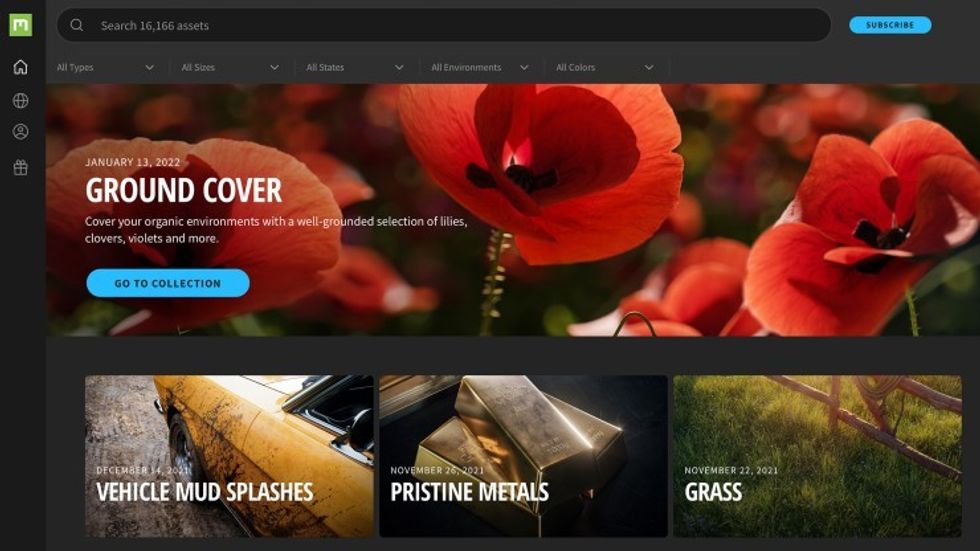

Unreal Engine is a game engine, a software package that helps creatives develop 3D and 2D video games. Quixel Megascans, a fairly recent acquisition by Epic Games, is a library of high-resolution photogrammetry scans: 3D assets reconstructed from hundreds of individual photographs.

When these pictures are combined into a 3D element, they create virtual reproductions of real-life objects. This includes things like rocks, foliage, urban clutter, and anything else you can point a camera at. See that picture of leaves below? None of that is real.
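The core geometric idea behind photogrammetry is triangulation: if the same feature appears in two photos taken from known positions, the two lines of sight meet at that feature’s location in 3D. The sketch below is a minimal illustration of that single step (the midpoint method for two rays), with hypothetical function names and the assumption that camera positions and viewing directions are already known. Real photogrammetry software also has to match features across hundreds of photos, estimate where each camera was, and refine everything together, none of which is shown here.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def triangulate_midpoint(p1: Vec3, d1: Vec3, p2: Vec3, d2: Vec3) -> Vec3:
    """Estimate a 3D point seen from two camera positions (hypothetical helper).

    p1, p2: camera centres; d1, d2: viewing directions toward the same surface
    feature (say, a speck on a rock) as recovered from each photo.
    Returns the midpoint of the shortest segment joining the two rays.
    """
    w0 = (p1[0] - p2[0], p1[1] - p2[1], p1[2] - p2[2])
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the two photos give no depth")
    s = (b * e - c * d) / denom  # position along ray 1, in units of d1
    t = (a * e - b * d) / denom  # position along ray 2, in units of d2
    q1 = tuple(p1[i] + s * d1[i] for i in range(3))  # closest point on ray 1
    q2 = tuple(p2[i] + t * d2[i] for i in range(3))  # closest point on ray 2
    return tuple((q1[i] + q2[i]) / 2 for i in range(3))


if __name__ == "__main__":
    # Two photos taken 2 m apart, both looking at the same feature.
    print(triangulate_midpoint((0.0, 0.0, 0.0), (1.0, 1.0, 5.0),
                               (2.0, 0.0, 0.0), (-1.0, 1.0, 5.0)))  # (1.0, 1.0, 5.0)
```

Using the midpoint rather than assuming the rays intersect exactly keeps the estimate stable when measurement noise makes the two lines of sight miss each other slightly.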

Filmmakers are now using Unreal Engine to create virtual backdrops that are then displayed on a volume of LED panels. These backdrops can be manipulated, lit, and redesigned in real time.

This sort of technology is an incredible milestone for filmmakers, but unfortunately, it may be out of reach for budget creatives. That hasn’t stopped some tech-savvy artists from tapping into the same ideas.

Virtual Production on a Budget

Both FXhome and Film Riot have done some incredible work that mimics the fundamentals of virtual productions. While they aren’t using the most up-to-date technology, it’s exciting to see this creative bunch push the boundaries of budget filmmaking.

FXhome shot on an Apple iPad using CamTrackAR to capture camera motion data, filmed their subject against a green screen, and then used Blender and HitFilm to create the final composite. Check out the video below for their full tutorial.

Film Riot took a different approach and used a high-resolution projector to create their background environment. Much like a virtual production volume, or the Oblivion set, this approach gave them some amazing results. Check out their process in the video below.

The Future of Virtual Production

As companies like Sony and Epic Games make dedicated tools for virtual production workflows, it will become easier for filmmakers to make their creative vision reality.

While the fancy toys will be out of reach for most budget creatives, the technology always finds a way to trickle down—unlike in the economy, unfortunately. (At least we can thank Reagan for the concept, right?)

Whatever the future holds, we’ve come a long way since matte paintings on glass, and we at No Film School are eager to see how movies and TV shows evolve with this new tech.