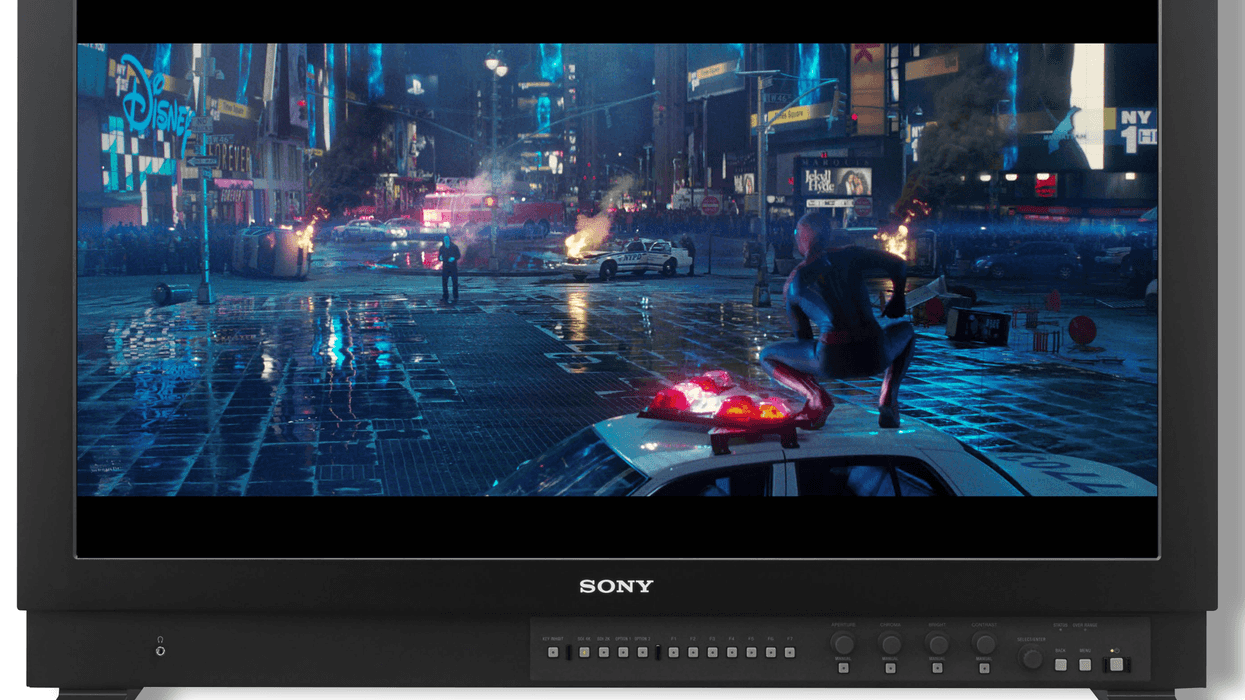

Sony Wants to Make HDR Painless—Here's How

Sony presented its system for making the transition to HDR content as painless as possible for broadcasters, with results that should have ripple effects into the film community.

New workflows can be tremendously painful for all filmmakers, but the struggle is particularly complicated for live broadcast. While upgrading from HD to 4K can be a real headache in narrative post (four times the pixels per frame can mean roughly quadrupled render times), you can always leave a render running overnight. In live events, however, everything needs to be processed and delivered in real time.

As we transition to an HDR marketplace, Sony is making efforts to find methods for HDR content capture and delivery that don't require massive capital outlays or redundant architecture. The goal: instead of requiring an HD truck, a 4K truck, and a 4K HDR truck, you can have a single post truck working with a single set of cameras to capture all three formats simultaneously.


On the NAB stage in New York this week, Sony Broadcast and Production Division Chief Technology Officer Hugo Gaggioni presented the company's system for integrating HDR capture into the broadcast landscape.

The first hurdle to overcome was settling on sensor size. While most filmmakers push for larger sensors, which offer better low-light performance thanks to their larger photosites, live event cinematography tends to remain in a 2/3" chip universe.

Not only does the smaller sensor offer a deeper depth of field at the same field of view (making focus easier during hectic events like sports), it also allows for the use of tremendous zoom lenses, sometimes with as much as an 80x zoom range, which would be prohibitively heavy and expensive to develop for Super 35 use.

This pushed Sony to focus instead on methods for capturing the latitude required for HDR on the 2/3" sensors they already had, specifically the popular HDC-4300 camera they were already using to capture live events in 4K.


Sony's solution was to use Slog3, a previously developed Sony technology, to best take advantage of both the capture characteristics of the camera and the distribution formats available for HDR.

Interestingly, the HDR standard, Rec. 2100, allows for two competing HDR workflows: perceptual quantization (PQ) and hybrid log-gamma (HLG). Sony tested traditional HLG workflows and found that the resulting HDR image was of great quality. However, for home viewers who only had SDR sets, HLG images looked too dim.
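For readers who want to see what separates the two encodings, here is a minimal Python sketch of the HLG OETF and the PQ inverse EOTF, using the constants as published in ITU-R BT.2100. The function names are ours, chosen for illustration, and this covers only the transfer curves themselves. It is not Sony's processing chain, and it leaves out the display-side steps (such as the HLG OOTF) that a real pipeline needs.

```python
import math

# HLG OETF constants (ITU-R BT.2100). B and C are derived from A.
HLG_A = 0.17883277
HLG_B = 1 - 4 * HLG_A
HLG_C = 0.5 - HLG_A * math.log(4 * HLG_A)


def hlg_oetf(e: float) -> float:
    """Map normalized scene-linear light (0..1) to an HLG signal (0..1)."""
    e = min(max(e, 0.0), 1.0)
    if e <= 1 / 12:
        # Square-root segment for the darker part of the range
        return math.sqrt(3 * e)
    # Logarithmic segment for highlights
    return HLG_A * math.log(12 * e - HLG_B) + HLG_C


# PQ constants (ITU-R BT.2100, originally SMPTE ST 2084)
PQ_M1 = 2610 / 16384
PQ_M2 = 2523 / 4096 * 128
PQ_C1 = 3424 / 4096
PQ_C2 = 2413 / 4096 * 32
PQ_C3 = 2392 / 4096 * 32


def pq_inverse_eotf(nits: float) -> float:
    """Map absolute display luminance (0..10000 cd/m^2) to a PQ signal (0..1)."""
    y = min(max(nits, 0.0), 10000.0) / 10000.0
    yp = y ** PQ_M1
    return ((PQ_C1 + PQ_C2 * yp) / (1 + PQ_C3 * yp)) ** PQ_M2


if __name__ == "__main__":
    print(f"HLG signal for scene light 1/12: {hlg_oetf(1 / 12):.3f}")
    print(f"PQ signal for 100 nits:          {pq_inverse_eotf(100):.3f}")
    print(f"PQ signal for 10000 nits:        {pq_inverse_eotf(10000):.3f}")
```

The key difference shows up in the inputs: HLG takes relative scene light, while PQ is tied to absolute display luminance up to 10,000 nits. That is part of why the choice between them is a real delivery decision rather than a matter of taste.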

In Sony's internal testing, the company discovered that the aggressive curve of Slog3 created an image that worked for both SDR and HDR imagery and was compatible with the HLG broadcast spec. They developed a BPU (baseband processing unit) capable of delivering simultaneous HDR and SDR signals that looked good in both formats. Both came directly from a single camera source, requiring no additional processing.
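To give a sense of what that curve looks like, here is a short Python sketch of the Slog3 encoding, based on the formula in Sony's published S-Log3 technical summary as we understand it. The function name `slog3_encode` is hypothetical, and this shows only the camera-side log curve. It says nothing about how Sony's BPU derives its HDR and SDR outputs, which the presentation did not detail.

```python
import math


def slog3_encode(reflection: float) -> float:
    """Map scene-linear reflectance (0.18 = 18% grey) to a normalized
    Slog3 code value in 0..1 (10-bit code value divided by 1023).

    Constants follow Sony's published S-Log3 formula; treat this as an
    illustrative sketch, not a production color transform.
    """
    if reflection >= 0.01125:
        # Logarithmic segment: 18% grey is pinned to 10-bit code 420
        return (420.0 + math.log10((reflection + 0.01) / (0.18 + 0.01)) * 261.5) / 1023.0
    # Linear segment near black: code 95 at zero light
    return (reflection * (171.2102946929 - 95.0) / 0.01125 + 95.0) / 1023.0


if __name__ == "__main__":
    for name, value in [("black", 0.0), ("18% grey", 0.18), ("90% white", 0.90)]:
        print(f"{name:>10}: {slog3_encode(value):.3f}")
```

With these constants, 18% grey lands at about 41% of the signal range, which leaves a lot of code values above it for highlights. That headroom is the kind of latitude an HDR deliverable depends on.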


How does this affect filmmakers? For better or worse, broadcast standards end up impacting us all, since many of our projects designed for theatrical release end up living most or even all of their lives in the broadcast arena. Thus, if you master your project in HDR for theatrical, you'll likely be making decisions about PQ or HLG encoding for your broadcast HDR release. And if you shoot Sony, the Slog3 to HDR workflow is good to know.

Additionally, as Sony works to integrate this workflow into more of its cameras, many cameras that straddle the divide between broadcast and narrative work (such as future updates to the FS7 line) will end up integrating technology developed for the live event world. Sony's solution might also offer a more cost-effective route for low-budget filmmakers who want to deliver HDR and SDR masters of their projects without spending expensive time in a color suite mastering each version separately.