HP Z8 Desktop: A Fast On-ramp to Real-time Production at Area of Effect

AOE is driving a VFX renaissance in film and television production by embracing emerging technology

Z8 Desktop Workstation

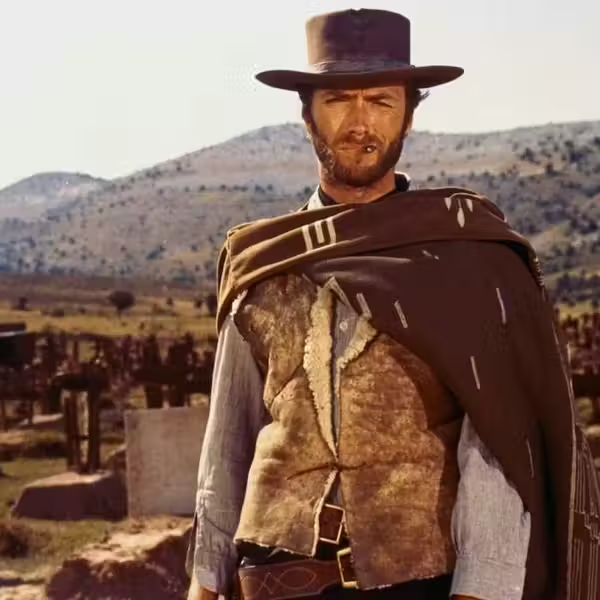

Whether you call him a fixer, a generalist, or your own personal strike team, Real-Time Producer Bryce Cohen is the guy you want if you’re in the middle of a virtual production shoot and need to deliver high-quality content fast. That’s his primary role at Area of Effect Studios (AOE), a full-stack VFX and virtual production studio specializing in innovative visualization.

Bryce Cohen

Credit: Bryce Cohen

AOE has set its sights on driving a VFX renaissance in feature film and television production by using cutting-edge gaming technology. It’s also the creative sandbox for Academy Award-winning VFX Supervisor Rob Legato, an executive at the studio, and the virtual production arm of game development studio Rogue Initiative, where Director Michael Bay is a Creative Partner.

In its mission to change the way content creators think about the entire pre- and post-production process, Area of Effect grabbed the opportunity to work on the HP Z8 desktop workstation, provided by HP and NVIDIA. Supporting up to 56 cores in a single CPU and high-end GPUs, the workstation delivers the faster frame rates and overall visual quality that make new in-engine workflows palatable to experienced filmmakers. The Z8 at AOE is equipped with two NVIDIA RTX™ A6000 graphics cards.

As Cohen says, “The Z8 helps remove some of the roadblocks that established creatives in the industry would initially have toward emerging real-time pipelines for creating the content they've been making forever.”

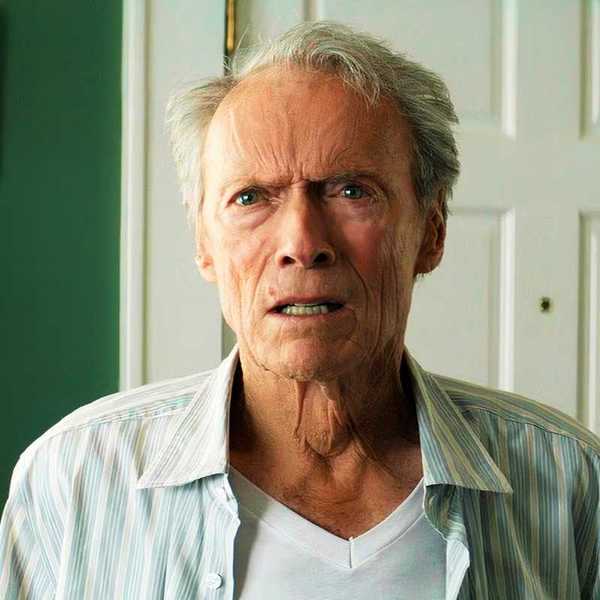

Bryce Cohen and Case-Y

Bryce Cohen

Accelerating in-engine work for film, television, and immersive productions

One re-imagined workflow that AOE now executes routinely is in-engine VFX pre-visualization using Epic Games’ Unreal Engine. They have applied the technique on several projects, including the recent Apple TV+ film Emancipation, starring Will Smith.

“There's a great argument to using Unreal for previs,” said Cohen. “You can see how big an explosion will be in-frame in-engine quickly and not have to hire a whole team of people to make sure no one gets hurt. But while the technology is capable of doing that, if we put a VR headset on an A-list director to do a virtual location scout and they’re getting five frames a second, they’re going to throw the headset off because it’ll make them sick. It's not a worthwhile venture for them and they’ll never want to do it again.”

Easily expand the Z8 G5 as your work evolves.

Having the Z8 handle the task changes the equation. Said Cohen, “The Z8 can pretty much crunch whatever I throw at it. I will unplug the Z8 and cart it over to the director, plug it in, and they’ll be ready to go. Whether the project's performing or not, the Z8 will be.”

AOE has also produced real-time facial and body motion capture in realistic environments to create high-quality digital humans for a client demonstration. The studio has staged real-time musical performances in which a live artist performs onstage alongside a virtual character, and it continually produces proofs of concept and game demos for investors that depend on the advanced performance of the Z8.

“For those projects where there’s a high-pressure environment and nothing can go wrong, we take that HP Z8 workstation with us,” added Cohen.

The Z8 G5's Front I/O

Powering experimentation with NeRFs

Beyond films, episodics, and games, AOE invests time in interesting side projects where they get to test their tools and production workflows on a small scale, then apply that learning to their long-form work. They have created several in-engine music videos and experimental projects for artists represented by Loud Robot, a joint venture between RCA and J.J. Abrams’ production company Bad Robot.

One of these projects involved producing content around a virtual character for electronic duo Milkblood. The character, Case-Y, was part of a world-building narrative around the duo. For the music video, AOE created the character’s movement using a motion capture suit, then placed it into different virtual environments in Unreal Engine. The videos were delivered across platforms like YouTube and TikTok.

After the project was finished, with the permission of Milkblood and Loud Robot, Cohen launched an experiment. “Having Case-Y living on the computer in my garage gave me an idea. It was impossible for me to bring him into my actual space, but what if I could bring my world to him in his virtual space? I had been looking for an excuse to learn NeRFs, and this was it.”

NeRFs are neural radiance fields -- fully connected neural networks that can automatically build 3D representations of objects or scenes from 2D images using advanced machine learning. They’re an emerging technology that enables real-world objects and locations to be recreated in a digital space.
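To make that description a little more concrete, here is a minimal sketch of the step that turns a radiance field into a pixel. A trained network predicts a density and a color for each sample point along a camera ray, and those samples are then blended front to back. The function name `composite_ray` and the hard-coded sample values are assumptions for illustration only; this is not AOE's code or NeRFStudio's implementation, and the hand-picked numbers stand in for what a trained network would output.

```python
import math


def composite_ray(densities, colors, deltas):
    """Blend samples along one camera ray into a single RGB pixel.

    densities: volume density at each sample (a trained network would predict these)
    colors:    (r, g, b) at each sample (also predicted by the network)
    deltas:    distance between consecutive samples along the ray
    """
    transmittance = 1.0  # fraction of light not yet blocked by nearer samples
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha          # contribution of this sample
        for c in range(3):
            pixel[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha            # light remaining for farther samples
    return tuple(pixel)


# Hypothetical ray: empty air, then a dense red surface, then blue behind it.
pixel = composite_ray(
    densities=[0.0, 1000.0, 1000.0],
    colors=[(0.0, 1.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)],
    deltas=[0.1, 0.1, 0.1],
)
print(pixel)
```

In this example the empty sample contributes nothing and the red surface hides the blue one behind it, so the pixel comes out essentially pure red. A full renderer repeats this for every pixel in every frame, which is one reason real-time NeRF playback is demanding.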

Cohen explained, “Pre-built digital environments have to be purchased, and the realistic-looking ones take up a ton of storage on your computer. While we were working on the project, I wondered, ‘What if I didn’t have to pay for environments and got them to look uncannily real? What if they were also places people might recognize?’”

To test his idea, Cohen learned how to make a NeRF from a YouTube tutorial: he walked around the space taking pictures with his iPhone, uploaded them to NeRFStudio for processing, and exported the resulting NeRF into Unreal Engine. Once he had everything on the Z8 workstation -- the actual environment and the digital Case-Y -- he was ready to launch the experiment.

A power glitch in the area delayed the project and he took the files to a consumer-level workstation in another location. “I could see that the environment was definitely there, but it was getting maybe two frames per second maximum. I’d put an input for a movement on the computer and wait three seconds and see the movement happen. It looked good but was totally unusable.”

Comparison of Cohen's view and Case-Y's NeRF world.

Bringing the project to the Z8 workstation was a completely different experience. “Everything ran smoothly. I knew what this character was doing. I knew where he was in the scene. I was able to experiment with camera movement, making it feel real, and blending the character into the real world because I was getting about 40 frames per second. I would have gotten more, but as it was my first time creating a NeRF I had made it much bigger than what was advised.”
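The frame rates Cohen quotes are easier to compare as time per frame. The short sketch below does that conversion for the three figures mentioned in this article; the helper name `frame_time_ms` is an assumption for illustration, and the result is only the time spent on each frame, not a measurement of the full input-to-screen delay he describes.

```python
def frame_time_ms(fps):
    """Return the time spent on each frame, in milliseconds."""
    return 1000.0 / fps


# Frame rates quoted in the article.
rates = [
    ("consumer-level workstation, NeRF scene", 2),
    ("VR location scout example", 5),
    ("Z8 workstation, NeRF scene", 40),
]

for label, fps in rates:
    print(f"{label}: {fps} fps = {frame_time_ms(fps):.0f} ms per frame")
```

At 2 frames per second each frame takes 500 ms, at 5 it takes 200 ms, and at 40 it takes 25 ms, which is the difference between waiting on every input and seeing movement respond as it happens.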

To blend the character with the world, Cohen walked around the physical space and recorded his movements on his phone using Glassbox Dragonfly, which brings the phone's location data into Unreal Engine and lets the user see what the phone is seeing. He mounted a GoPro camera to the front of the phone to capture what his movement looked like in the real world, then applied that recorded movement to a virtual copy of the real world to build the scene.

“If you're trying to use a 3D environment of a NeRF, or if you're trying to import a NeRF into Unreal, and then use it to get real-time feedback of even just your movement inside that environment, you're going to need a really powerful computer. You could scale down the NeRF's quality, but the whole point of using a NeRF is to make something that feels realistic. It just wouldn't have if I didn't have the Z8 workstation.”

That kind of bold experimentation is what AOE is all about -- driving content creation forward by helping filmmakers make discoveries and use technology to bridge the physical and virtual worlds. And if they find themselves in a tight spot, well, they can always call on Cohen. He’ll bring his Z8 workstation.